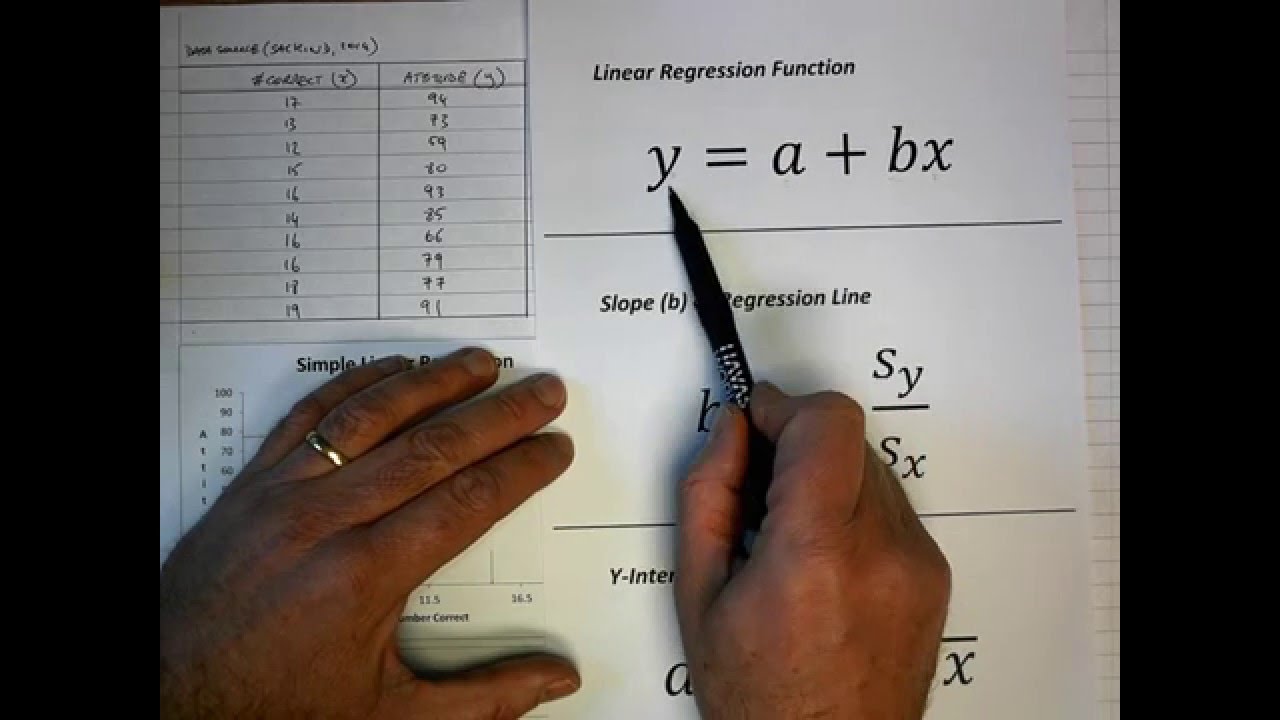

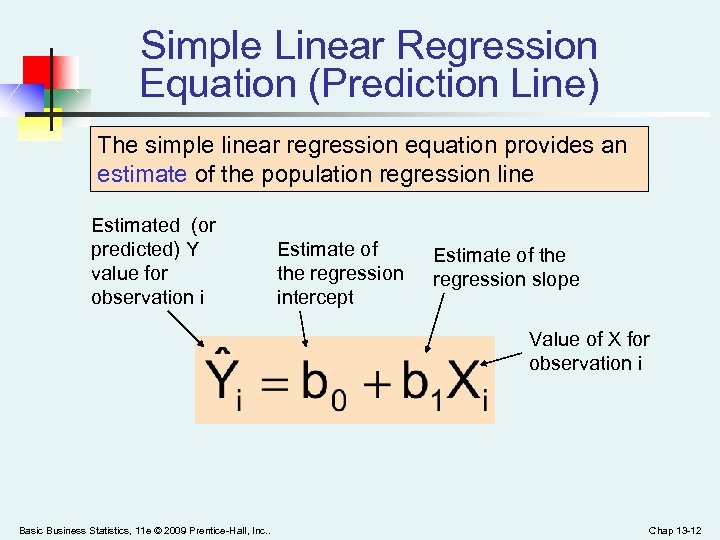

The sum of squares is a statistical measure of variability. It indicates the dispersion of data points around the mean and, in regression analysis, how much the dependent variable deviates from the predicted values. We decompose variability into the sum of squares total (SST), the sum of squares regression (SSR), and the sum of squares error (SSE). This decomposition helps us understand the sources of variation in our data, assess a model’s goodness of fit, and understand the relationship between variables. We define SST, SSR, and SSE below and explain which aspect of variability each one measures. But first, ensure you’re not mistaking regression for correlation.

This article addresses SST, SSR, and SSE in the context of the ANOVA framework, but sums of squares are used in many other statistical analyses as well.

The sum of squares total (SST), or total sum of squares (TSS), is the sum of squared differences between the observed values of the dependent variable and their overall mean. Think of it as the dispersion of the observations around the mean, similar to the variance in descriptive statistics. SST, however, measures the total variability of a dataset and is commonly used in regression analysis and ANOVA. Mathematically, the difference between the variance and SST is that the variance formula adjusts for the degrees of freedom by dividing by n–1, so the sample variance equals SST divided by n–1.

If SSR equals SST, our regression model perfectly captures all the observed variability, but that’s rarely the case.

The sum of squares error (SSE), or residual sum of squares (RSS, where residual means remaining or unexplained), is the sum of squared differences between the observed and predicted values. The SSE calculation uses the following formula:

\[ SSE = \sum_{i=1}^{n} \varepsilon_i^2 \]

where \(\varepsilon_i\) is the difference between the actual value of the dependent variable and the predicted value. Regression analysis aims to minimize the SSE: the smaller the error, the better the regression’s estimation power.
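To make the three quantities concrete, here is a minimal sketch in plain Python that fits a least-squares line to a small dataset and computes SST, SSR, and SSE. The data are invented for illustration, the function name `sums_of_squares` is hypothetical, and the sketch assumes the usual definition of SSR as the sum of squared differences between the predicted values and the mean of the dependent variable. For an ordinary least-squares fit with an intercept, SST should equal SSR plus SSE up to rounding.

```python
def sums_of_squares(x, y):
    """Fit y = b0 + b1 * x by ordinary least squares; return (SST, SSR, SSE)."""
    n = len(x)
    x_mean = sum(x) / n
    y_mean = sum(y) / n

    # Closed-form least-squares estimates for a single predictor.
    sxy = sum((xi - x_mean) * (yi - y_mean) for xi, yi in zip(x, y))
    sxx = sum((xi - x_mean) ** 2 for xi in x)
    b1 = sxy / sxx
    b0 = y_mean - b1 * x_mean

    # Predicted values of the dependent variable.
    y_hat = [b0 + b1 * xi for xi in x]

    # SST: observed values vs. the overall mean.
    sst = sum((yi - y_mean) ** 2 for yi in y)
    # SSR (assumed definition): predicted values vs. the overall mean.
    ssr = sum((yh - y_mean) ** 2 for yh in y_hat)
    # SSE: observed values vs. predicted values (squared residuals).
    sse = sum((yi - yh) ** 2 for yi, yh in zip(y, y_hat))
    return sst, ssr, sse


# Hypothetical data, invented for illustration only.
x = [1.0, 2.0, 3.0, 4.0, 5.0]
y = [2.0, 4.0, 5.0, 4.0, 5.0]

sst, ssr, sse = sums_of_squares(x, y)
print(f"SST = {sst:.3f}, SSR = {ssr:.3f}, SSE = {sse:.3f}")
print(f"SSR + SSE = {ssr + sse:.3f}")
print(f"Sample variance = SST / (n - 1) = {sst / (len(y) - 1):.3f}")
```

For this toy dataset, SST is 6.0, which splits into an SSR of about 3.6 and an SSE of about 2.4. Dividing SST by n–1 = 4 gives the sample variance of 1.5.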